Top advertisers still funding disinformation and conspiracy theories

Posted by Jeremy Spitzberg • Jan 31, 2020 9:26:45 AM

The New York Times recently ran an article in their Opinion section about how programmatic ads from some of our largest and most influential companies are funding RT (né Russia Today) and Sputnik News.

We're going to assume that this was shunted into Opinion because the author, L. Gordon Crovitz, has a financial interest in the matter, as Nandini Jammi of Sleeping Giants pointed out on Twitter in her own inimitable style.

Regardless of its provenance, the article shines a light on yet another way brands are failing to protect themselves from reputational harm online. Our survey, run in conjunction with Trustworthy Accountability Group (TAG), found that advertisers will suffer from bad ad adjacency.

When asked who should be responsible for ensuring ads do not run with dangerous, offensive, or inappropriate content, respondents assigned responsibility broadly, with 70 percent naming the advertiser, 68 percent the ad agency, 61 percent the website owner, and 46 percent the technology provider.

We would hope that these large marketers wouldn't find themselves in these situations. We hope that better education, through our Brand Safety Officer curriculum, as well as the many other industry efforts on these issues, will continue to make brands smarter and safer.

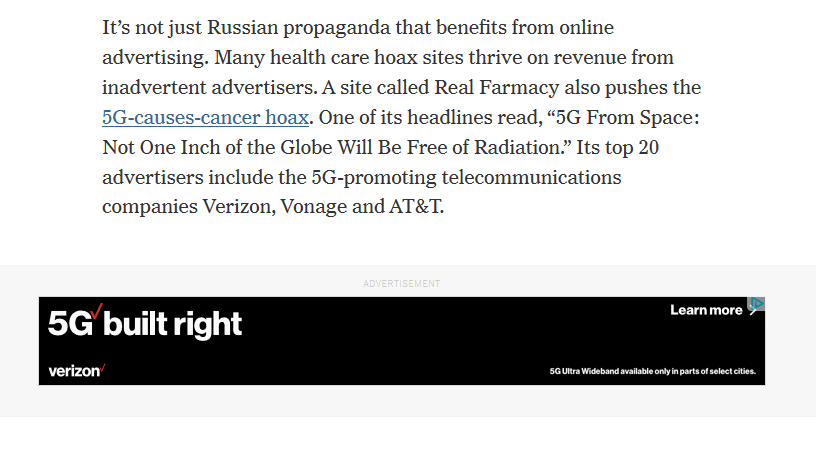

One detail in the article caught our attention. In discussing the disinformation found on RT, the article points out a particular conspiracy theory:

A new theme on RT is that the emerging wireless 5G technology causes cancer. RT also reports that 5G cell towers cause learning disabilities and nose bleeds in children. No research establishes these risks. (Russia is far behind in 5G, which may explain this line of disinformation.) Despite this scaremongering, telecommunications companies that depend on the success of 5G have advertised on RT, including T-Mobile, CenturyLink, Comcast and Vonage. Cisco has advertised on RT, even though it is investing heavily in 5G technology.

It certainly helped catch our eye, as it ran next to an ad for Verizon's 5G!

Now, block lists are something we've written a bit about, but it seems programmatic ads can't avoid the honey pot of certain terms. To understand this better, we reached out to Jonathan Marciano, Director of Communications at CHEQ, a company doing ad verification via AI, who has published extensively on the topic recently. Here's some of what he had to say:

In a study last year, "Bad Impressions", CHEQ analyzed 70 global iconic brands (including 21 Fortune 500 companies) which served ads on news sites between January 2018 and March 2019. While all of the brands suffered from negative brand placements, especially concerning is that 3% of violations involved companies advertising their regular promotions next to stories detailing unfolding crises at their own company. This included one company serving adverts next to stories about a damaging product recall at their firm, while another brand served promotions to consumers reading about an operations meltdown at their company.

Unsurprisingly, they promote AI as a means to combat the problem. At the risk of running our own infomercial for them (full disclosure, we do not have any financial interest in this, or hold any position on the company or the technology), it is interesting how the software works.

This is where the importance of AI brand safety comes in (for instance CHEQ). AI completes sequences to understand the meaning of the text, in order to identify the exact context of a story, similar to the way a human brain works. This will essentially build up a full picture of the actual story. In contrast, blunt tools like keywords are over- or under-inclusive. The approach of AI enables a more contextual approach, putting brands in control by understanding the exact meaning of any news source and determining exactly what a piece of content is.

In one example, a fast-food restaurant chain may want to avoid advertising next to content about obesity. Engineers have trained the AI to define obesity as a category, but also trained it to understand sub-terms in context (such as heart disease and diabetes). To uncover if a piece of content is about obesity or not, the technology does not just look at one specific keyword, but rather analyzes how many category subterms are present in the article, and what's the relation between them. This allows the AI to understand if an obesity-related word was randomly present or if the content is about obesity.

In the case of cancer/5G, AI would determine that this article is brand unsafe because of the particles in the story building up that your company is in a story about cancer (blocking it from serving), but any company or product linked to cancer would likely be blocked, based on most brand safety guidelines. However, in a different scenario a brand may determine that it is happy to have ads served in a story that it considers neutral, such as that cancer rates have been reduced. The particular brand is put in control of what is appropriate for them with a far more strategic degree of sophistication, based on their precise brand safety principles.
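To make the obesity example above a little more concrete, here is a toy sketch of the difference between a blunt keyword rule and a check that counts category subterms and looks at whether they appear together. To be clear, this is our own illustration and not CHEQ's software: the subterm list, the thresholds, the function names, and the use of same-sentence co-occurrence as a stand-in for "the relation between them" are all assumptions we made for the sake of the example.

```python
import re

# Hypothetical category definition; these terms are illustrative only.
OBESITY_SUBTERMS = {"obesity", "obese", "overweight", "heart disease", "diabetes", "bmi"}


def keyword_block(text, keyword="obesity"):
    """Blunt keyword rule: block if the keyword appears anywhere in the text."""
    return keyword in text.lower()


def _subterms_in(chunk, subterms):
    """Return the subterms that appear as whole words or phrases in the chunk."""
    return {t for t in subterms if re.search(r"\b" + re.escape(t) + r"\b", chunk)}


def category_score(text, subterms=OBESITY_SUBTERMS):
    """Return (distinct subterms found, sentences containing two or more subterms)."""
    lowered = text.lower()
    sentences = re.split(r"[.!?]+", lowered)
    distinct = _subterms_in(lowered, subterms)
    co_occurring = sum(1 for s in sentences if len(_subterms_in(s, subterms)) >= 2)
    return len(distinct), co_occurring


def category_block(text, min_distinct=3, min_co_occurring=1):
    """Block only when several subterms are present and some appear together."""
    distinct, co_occurring = category_score(text)
    return distinct >= min_distinct and co_occurring >= min_co_occurring


# A passing mention of the keyword versus an article that is about the topic.
passing_mention = (
    "The mayor joked that the city's budget suffers from obesity. "
    "Councillors will vote on Tuesday."
)
health_story = (
    "Obesity raises the risk of heart disease and diabetes. "
    "Doctors say overweight patients should track their BMI."
)

print(keyword_block(passing_mention), category_block(passing_mention))
print(keyword_block(health_story), category_block(health_story))
```

The keyword rule flags both texts, while the subterm check flags only the health story. A real system would presumably rely on a trained language model rather than hand-picked word lists, but the sketch shows why a single keyword match is too coarse a signal.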

However brands choose to ensure their own safety online, we just want to reiterate that education is the key. Brand Safety Officers will have to be aware of all the dangers they face, as well as the tools available to them. That education will come from certification like ours, and from a community of like-minded professionals.

Topics: Brand Safety, Brand Safety Officers, Ad Adjacency, Block lists, Disinformation, Brand Suitability
